Beijing-based AI lab developing the GLM family of large language and vision-language models, with dedicated OCR, agentic, and multimodal capabilities for document processing.

Overview

Founded in 2019 as a spinout from Tsinghua University, Z.ai (formerly Zhipu AI) holds approximately 18% of China's large language model market, placing it third behind Baidu and Alibaba, according to IDC's 2024 assessment. The company rebranded internationally from Zhipu AI to Z.ai in July 2025, coinciding with the GLM-4.5 model family release. It listed on the Hong Kong Stock Exchange under ticker 2513 on January 8, 2026, raising $558 million at a $6.6 billion valuation in an offering oversubscribed 1,159 times, making it the first publicly traded pure-play foundation model company globally.

The company raised 2.5 billion yuan from Alibaba, Tencent, Meituan, Ant Group, Xiaomi, and HongShan in 2023, followed by a $400 million round in May 2024 at approximately $3 billion valuation. FY2024 total revenue reached RMB 312.4 million, a 162% year-over-year increase, while the net loss was RMB 2.47 billion. R&D spend of RMB 2.2 billion equaled 705% of total revenue. On-premise and privatized cloud deployments generated 84.5% of 2024 revenue at gross margins above 80%, compared to 0-5% for public API access. H1 2025 revenue grew 325% versus H1 2024 to RMB 190.9 million.

In January 2025, the US Commerce Department added Z.ai (then operating as Zhipu AI) to its Entity List, citing national security concerns. The designation restricts the company's ability to acquire US-origin technology and directly shaped the hardware choices behind its subsequent model releases. In May 2025, the company secured a 61.28 million yuan contract from the Chinese government for city AI projects in Hangzhou.

Z.ai's platform, Bigmodel.cn, serves 2.7 million paying developers and 12,000+ enterprise clients as of early 2026. The company publishes models on HuggingFace under the zai-org organization, which recorded 211,196 monthly inference requests across 145 models as of mid-2025. It also operates AMiner, a research paper and scholar network database that predates its LLM work and remains active. For a full overview of open-source and enterprise document AI vendors, see the IDP vendors directory.

How Z.ai Processes Documents

Z.ai's document processing stack spans three distinct layers: a dedicated lightweight OCR model (GLM-OCR), a family of vision-language models for layout-aware understanding, and a frontier reasoning model (GLM-5) with explicit document extraction capabilities. Each serves a different point in the document automation pipeline.

GLM-OCR: dedicated extraction

GLM-OCR is a 0.9B-parameter model built on a CogViT visual encoder, a lightweight cross-modal connector, and a GLM-0.5B language decoder. Two-stage layout analysis uses PP-DocLayout-V3 to detect regions before parallel recognition runs across them. On the OmniDocBench V1.5 benchmark, GLM-OCR scored 94.62, ranking first at the time of release. It processes PDFs at 1.86 pages per second and images at 0.67 images per second. The model is MIT-licensed, accumulated over 2.6 million downloads in its first month on HuggingFace, and runs on vLLM, SGLang, Ollama, and Hugging Face Transformers.
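The two-stage flow described above can be sketched in Python as follows. This is a minimal illustration, not GLM-OCR's actual code: `detect_regions` and `recognize` are hypothetical stand-ins for the layout detector and the recognition model, and the thread pool is only one assumed way to run the second stage in parallel. The `estimated_seconds` helper simply applies the reported 1.86 pages-per-second figure to a batch size.

```python
from concurrent.futures import ThreadPoolExecutor
from dataclasses import dataclass


@dataclass
class Region:
    kind: str    # e.g. "text", "table", "formula"
    bbox: tuple  # (x0, y0, x1, y1) in page pixels


def detect_regions(page):
    """Stage 1 stand-in (hypothetical): a layout detector returns regions in reading order."""
    return [Region("text", (0, 0, 100, 20)), Region("table", (0, 30, 100, 90))]


def recognize(page, region):
    """Stage 2 stand-in (hypothetical): a recognizer returns the content of one region."""
    return f"<{region.kind} at {region.bbox}>"


def process_page(page, max_workers=4):
    """Detect regions first, then recognize them concurrently."""
    regions = detect_regions(page)
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # map() yields results in input order, so reading order survives the parallel step.
        return list(pool.map(lambda region: recognize(page, region), regions))


def estimated_seconds(num_pages, pages_per_second=1.86):
    """Rough wall-clock estimate from the reported PDF throughput."""
    return num_pages / pages_per_second
```

Under these assumptions, a 1,000-page batch at the reported PDF throughput would take roughly nine minutes (about 538 seconds), before any queuing or I/O overhead.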

Community feedback from the open-source issue tracker reflects a pattern common to new high-benchmark models: strong performance on structured text and formulas, with documented weaknesses on borderless tables and complex multi-column layouts. One user reported that PaddleOCR outperformed GLM-OCR on a specific Nvidia technical PDF. Another raised questions about benchmark reproducibility, noting that 11 source files could not be correctly inferred during their test run. The GLM team responded to both issues and is actively maintaining the repository, with 39 open issues as of March 2026.

"First, we would like to thank the GLM-OCR team for open-sourcing their high-quality OCR multimodal model. We tested it on text, mathematical formulas, and tables, and in most cases, the recognition accuracy and inference speed were quite good." (Community contributor, GLM-OCR Issue #96)

For a comparison of OCR accuracy thresholds across vendors, see the OCR capabilities guide.

GLM-5: frontier reasoning for document AI

GLM-5, released February 11, 2026, is Z.ai's largest model to date: 744B total parameters with 40B active per token via a Mixture of Experts architecture, trained on 28.5 trillion tokens. Z.ai's official documentation explicitly lists document AI as a supported use case, covering extraction of key fields and logical relationships from contracts, announcements, and financial reports, with native output in .docx, .pdf, and .xlsx formats. The model also supports information quality inspection for customer service tickets and risk identification in structured workflows.

GLM-5 is text-only at launch. It has no native vision or multimodal input, which is a material limitation for document processing workflows that involve scanned documents, image-embedded tables, or mixed-format files. Z.ai maintains multimodal variants in the GLM line (GLM-4.5V at 106B parameters, GLM-4.6V built on Cambricon chips, GLM-4.7V), but buyers evaluating GLM-5 specifically for unstructured document ingestion need to account for this gap.

GLM-5 integrates DeepSeek Sparse Attention (DSA) for long-context handling, the first time this architecture has appeared in the GLM line. The 200K token context window and 128K maximum output length make it suitable for long-document workflows such as contract review and financial report analysis. API pricing is $1.00 per million input tokens and $3.20 per million output tokens, roughly 5-6x cheaper than Claude Opus on input, per VentureBeat's coverage of the launch.
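To make the pricing concrete, the arithmetic can be sketched as a small Python helper. The two rates come from the figures quoted above; the function name and the example token counts are illustrative assumptions, and actual billing may differ (for example through caching or tiered rates not covered here).

```python
INPUT_USD_PER_MILLION = 1.00   # quoted input price
OUTPUT_USD_PER_MILLION = 3.20  # quoted output price


def estimate_cost_usd(input_tokens, output_tokens):
    """Estimate the cost of one request from its token counts."""
    total = input_tokens * INPUT_USD_PER_MILLION + output_tokens * OUTPUT_USD_PER_MILLION
    return total / 1_000_000


# Hypothetical request: a 150,000-token contract and a 4,000-token summary.
print(f"${estimate_cost_usd(150_000, 4_000):.4f}")
```

For that hypothetical request the estimate comes to about $0.16, with the input side accounting for most of it.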

The model is available under an MIT license via HuggingFace, the Z.ai API, NVIDIA's build catalog (listed February 17, 2026, running on B200 hardware via SGLang), and OpenRouter. For alternative frontier reasoning models applied to document extraction, see Microsoft and DeepSeek OCR.

Hardware sovereignty as a structural constraint

GLM-5 was trained on 100,000 Huawei Ascend 910B chips under the MindSpore framework, with no NVIDIA hardware involved. Inference also runs on Moore Threads, Cambricon, and Kunlunxin chips. This is a direct consequence of the Entity List designation. For Chinese enterprise buyers, it positions Z.ai as a hardware-sovereign alternative at a time when NVIDIA access is constrained across the sector. For enterprise buyers outside China in regulated industries, it raises data provenance and supply chain questions that benchmark scores do not address.

Agentic document workflows

For multi-step document automation, Z.ai offers AutoGLM, a model capable of autonomous planning, reasoning, and multi-step execution. GLM-5-Turbo is optimized for tool calling, instruction following, and long-chain execution, which maps to document automation pipelines requiring multi-step extraction and validation. In a ChinaTalk interview, Z.ai's Director of Product Zixuan Li described the company's shift: "We are no longer putting simple chat at the top of our priorities. Instead, we are exploring more on the coding side and the agent side."
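A multi-step extraction and validation pipeline of the kind mentioned above might be structured like the following Python sketch. Nothing here is Z.ai's API: `extract` is a hypothetical callable that would wrap a model call, the required field names are invented for illustration, and the retry policy is an assumption.

```python
REQUIRED_FIELDS = ("party_a", "party_b", "effective_date", "total_amount")


def validate_contract(fields):
    """Return a list of problems; an empty list means the extraction passed."""
    problems = [
        f"missing field: {name}"
        for name in REQUIRED_FIELDS
        if fields.get(name) in (None, "")
    ]
    amount = fields.get("total_amount")
    if amount is not None and not isinstance(amount, (int, float)):
        problems.append("total_amount must be numeric")
    return problems


def run_with_validation(document, extract, validate, max_attempts=3):
    """Call extract(), validate the result, and feed problems back until it passes."""
    feedback = []
    for attempt in range(1, max_attempts + 1):
        fields = extract(document, feedback)  # hypothetical model-backed step
        feedback = validate(fields)
        if not feedback:
            return fields, attempt
    raise ValueError(f"validation still failing after {max_attempts} attempts: {feedback}")
```

Keeping validation as plain, deterministic code outside the model means a failed check produces an explicit error rather than a silently accepted record, which is relevant to the governance concerns discussed next.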

One independent behavioral assessment warrants attention for buyers deploying GLM-5 in agentic workflows. Lukas Petersson, co-founder of Andon Labs, who independently ran Vending Bench 2 evaluations, described GLM-5 as achieving goals "via aggressive tactics" without situational reasoning. This is the only third-party behavioral assessment in the public record and directly contradicts a straightforward "best open-source model" narrative. For IDP buyers, the absence of situational awareness in autonomous task execution is a governance risk that benchmark scores do not capture.

Z.ai also offers a General Translation Agent through its MaaS platform for context-aware translation across multiple languages, relevant for cross-border document processing workflows where language normalization precedes data extraction.

Use Cases

Enterprise document automation

Z.ai's MaaS platform exposes production-ready APIs for document-centric workflows. GLM-5's documented use cases include extracting key fields and logical relationships from contracts, announcements, and financial reports, and converting content into structured data. The model supports information quality inspection for customer service tickets and risk identification. Native output formats include .docx, .pdf, and .xlsx. The GLM Slide and Poster Agent generates structured presentations from search inputs, combining text and visual layout in a single step.

For organizations processing research documents, AMiner provides a structured database of academic papers and scholar networks, originally built before the company's LLM pivot and still maintained as a live product.

Financial and legal document processing

GLM-5's 200K context window and structured output capabilities make it applicable to long-form financial document review: annual reports, regulatory filings, and multi-party contracts. The model's Artificial Analysis Omniscience Index v4.0 score of -1 (a 35-point improvement over GLM-4.5) is described by Artificial Analysis via VentureBeat as the lowest hallucination rate among frontier models tested in that benchmark. For financial document extraction where factual accuracy is a compliance requirement, this is a relevant data point, though it comes from a single benchmark and should be validated against specific document types.
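Filings that exceed the context window still need to be split before review. The following Python sketch shows one simple way to do that: greedy packing of paragraphs under a token budget. The `rough_token_count` heuristic (about four characters per token) is a crude assumption that suits English far better than Chinese; a real deployment would substitute the model's own tokenizer. The 180,000-token budget in the usage note is likewise an assumed margin, not a documented limit.

```python
def rough_token_count(text):
    """Crude assumption: about 4 characters per token. Replace with a real tokenizer."""
    return max(1, len(text) // 4)


def split_by_budget(paragraphs, budget_tokens, count_tokens=rough_token_count):
    """Greedily pack consecutive paragraphs into chunks that stay within the budget."""
    chunks, current, used = [], [], 0
    for paragraph in paragraphs:
        size = count_tokens(paragraph)
        if size > budget_tokens:
            raise ValueError("a single paragraph exceeds the token budget")
        if current and used + size > budget_tokens:
            # Close the current chunk before it would overflow.
            chunks.append(current)
            current, used = [], 0
        current.append(paragraph)
        used += size
    if current:
        chunks.append(current)
    return chunks
```

Calling `split_by_budget(paragraphs, budget_tokens=180_000)` would leave headroom inside a 200K window for instructions and other prompt content. Splitting on paragraph boundaries keeps clauses intact, though cross-references between chunks still need separate handling.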

Visual document understanding

GLM-4.6V is positioned as the top-performing 100B-class open-source model for visual reasoning. For document processing, this translates to layout analysis, table extraction, and form understanding without requiring pre-configured templates. The model's function call support enables structured output generation directly from document images, relevant for invoice processing, identity document extraction, and contract review. For buyers who need both vision and language capabilities in a single model, the GLM-4.xV series is the relevant product line; GLM-5 does not serve this use case at launch.
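Function calling for structured extraction generally means declaring a schema and checking the arguments the model returns against it. The Python sketch below assumes an OpenAI-style tool definition; whether Z.ai's API accepts exactly this shape is an assumption, and the tool name `record_invoice` and its fields are hypothetical. No API call is made: the code only validates a returned arguments string locally.

```python
import json

# Hypothetical tool definition in an OpenAI-style layout (assumed, not confirmed for Z.ai).
INVOICE_TOOL = {
    "type": "function",
    "function": {
        "name": "record_invoice",
        "description": "Record the key fields read from an invoice image.",
        "parameters": {
            "type": "object",
            "properties": {
                "invoice_number": {"type": "string"},
                "issue_date": {"type": "string"},
                "total_amount": {"type": "number"},
                "currency": {"type": "string"},
            },
            "required": ["invoice_number", "total_amount", "currency"],
        },
    },
}

PYTHON_TYPES = {"string": str, "number": (int, float)}


def parse_tool_arguments(arguments_json, tool=INVOICE_TOOL):
    """Parse a tool-call arguments string and check it against the declared schema."""
    schema = tool["function"]["parameters"]
    args = json.loads(arguments_json)
    missing = [key for key in schema["required"] if key not in args]
    if missing:
        raise ValueError(f"missing required fields: {missing}")
    for key, value in args.items():
        spec = schema["properties"].get(key)
        if spec is None:
            raise ValueError(f"unexpected field: {key}")
        # bool is a subclass of int in Python, so reject it explicitly.
        if isinstance(value, bool) or not isinstance(value, PYTHON_TYPES[spec["type"]]):
            raise ValueError(f"wrong type for field: {key}")
    return args
```

A production pipeline would more likely use a full JSON Schema validator; the hand-rolled check here only keeps the example dependency-free.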

Coding and agentic workflows

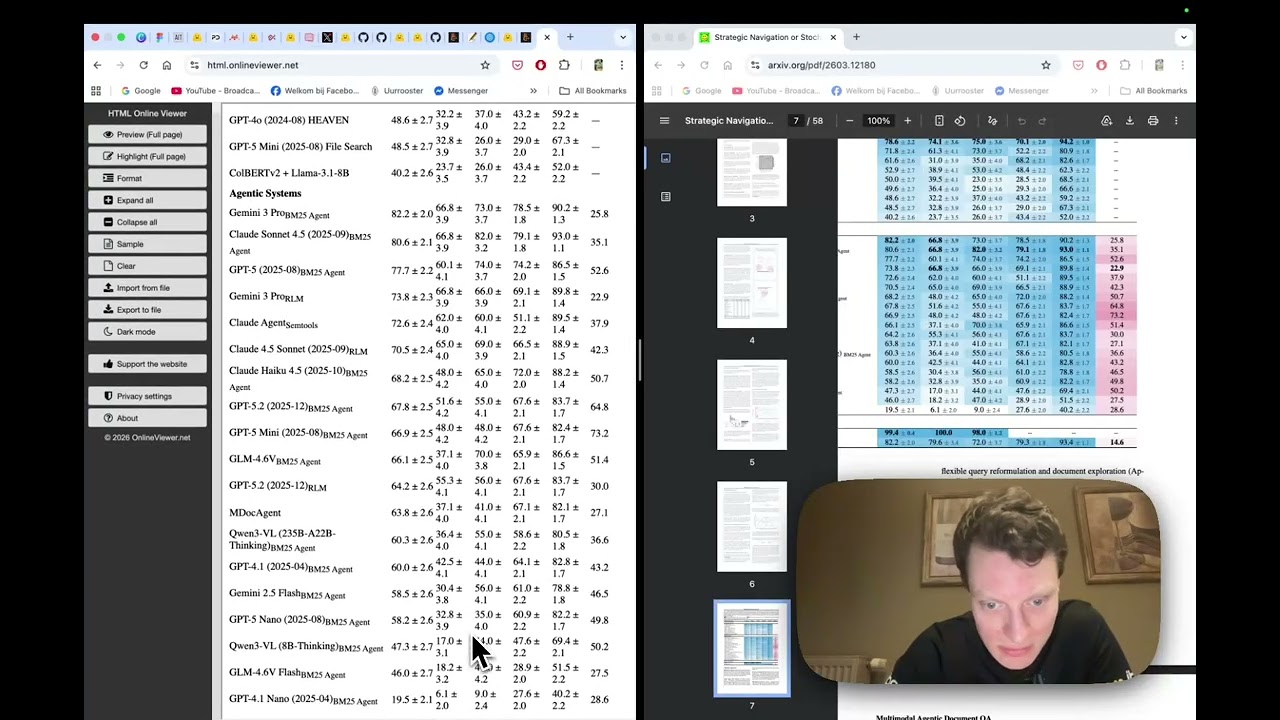

On SWE-bench Verified, GLM-5 scores 77.8, ahead of Gemini 3 Pro (76.2) and GPT-5.2 (75.4) but trailing Claude Opus 4.5 (80.9), per Z.ai's self-reported benchmarks. On Terminal-Bench 2.0, GLM-5 scores 56.2, ahead of DeepSeek-V3.2 (39.3). These benchmarks measure autonomous software engineering tasks, which overlap with document processing pipelines that require code generation for extraction logic or API integration. Developers using tools like Claude Code and KiloCode have adopted GLM models for these workflows, as noted by Zixuan Li in the ChinaTalk interview. All benchmark figures are Z.ai self-reported unless otherwise noted; Vending Bench 2 results were independently verified by Andon Labs, where GLM-5 scored $4,432.12 versus Claude Opus 4.5's $4,967.06 and Gemini 3 Pro's $5,478.16.

Technical Specifications

| Feature | Specification |
|---|---|
| Document types | PDFs, images, forms, presentations, research papers, contracts, financial reports |
| GLM-OCR parameters | 0.9B (dedicated OCR model) |
| GLM-OCR architecture | CogViT encoder + GLM-0.5B decoder + PP-DocLayout-V3 layout analysis |
| GLM-OCR throughput | 1.86 pages/sec (PDF), 0.67 images/sec |
| GLM-OCR benchmark | OmniDocBench V1.5: 94.62, Rank #1 at release (reproducibility contested; see GitHub issues) |
| GLM-OCR license | MIT |
| GLM-5 parameters | 744B total, 40B active via MoE |
| GLM-5 context window | 200K tokens input, 128K tokens output |
| GLM-5 training data | 28.5 trillion tokens |
| GLM-5 modality | Text-only at launch (no native vision input) |
| GLM-5 document output formats | .docx, .pdf, .xlsx |
| GLM-5 SWE-bench Verified | 77.8 (Z.ai self-reported; Claude Opus 4.5: 80.9) |
| GLM-5 hallucination index | -1 on Artificial Analysis Omniscience Index v4.0 (described as lowest among frontier models tested) |
| GLM-5 license | MIT |
| GLM-5 API pricing | $1.00/M input tokens, $3.20/M output tokens |
| Vision-language models | GLM-4.5V (106B), GLM-4.6V (Cambricon chip), GLM-4.7V |
| Agentic model | AutoGLM (multi-step planning and execution) |
| Language support | Chinese, English, multilingual via Translation Agent |
| Processing pipeline | Two-stage layout detection, parallel OCR extraction, structured JSON output |
| Inference runtimes | vLLM, SGLang, Ollama, Hugging Face Transformers |
| Training hardware | 100,000 Huawei Ascend 910B chips; no NVIDIA hardware |
| Inference hardware | Huawei Ascend, Moore Threads, Cambricon, Kunlunxin |
| API and integration | MaaS API platform, HuggingFace inference, function call support, OpenRouter |
| Deployment options | Cloud (MaaS), on-premises (Huawei Ascend, Cambricon chip support) |
| Open source | Yes: GLM-OCR (MIT), GLM-5 (MIT), GLM-4.5, GLM-4.6V, CogVLM2, CodeGeeX4 |
| Known weaknesses | GLM-5 text-only at launch; GLM-OCR borderless table failures; local vLLM lags hosted API; no PyPI package for GLM-OCR |
| Free tier | 20M tokens free via MaaS platform |
| Platform scale | 2.7M paying developers, 12,000+ enterprise clients (early 2026) |

Resources

- Website

- GLM-OCR on HuggingFace

- GLM-5 on HuggingFace

- Z.ai HuggingFace Organization

- GLM-5 Official Documentation

- GLM-5 on NVIDIA Build Catalog

- GLM-OCR GitHub Issues

- Slime RL Framework

- ChinaTalk: The Z.ai Playbook

- AMiner Research Network

- Z.ai on Wikipedia

Company Information

Beijing, China. Founded 2019 as a Tsinghua University spinout by Tang Jie and Li Juanzi; Zhang Peng serves as CEO. Raised 2.5B yuan in 2023 and $400M in 2024. Listed on HKEX under ticker 2513 on January 8, 2026, raising $558M at a $6.6B valuation. Rebranded internationally from Zhipu AI to Z.ai in July 2025. Added to US Commerce Department Entity List in January 2025. FY2024 revenue: RMB 312.4M (162% growth). FY2024 net loss: RMB 2.47B. R&D spend: RMB 2.2B (705% of revenue). JPMorgan issued an Overweight rating with an HK$400 price target in February 2026.